From Anecdotal to Evidence-Based

Building a Research Culture at Scale

Opinions are interesting... How I built Assent's UX research practice from zero, establishing infrastructure, methods, tooling, and a culture of data-informed decision-making across a complex B2B platform.

The Problem

When I joined Assent in 2018, there was no research practice. No process. No participant pool. No recruitment infrastructure. No shared tooling for capturing or synthesizing insights. Design decisions were made on instinct and stakeholder opinion, and nobody questioned it because there was nothing to compare it to.

The result was predictable. Teams shipped features they couldn't validate. Stakeholders drove priorities based on gut feel. Design was reactive rather than generative. And the organization had no shared language for how insights should inform product decisions.

This wasn't a tooling gap. It was a cultural one.

A Structured Research Process

I formalized a six-stage research methodology: Define, Frame, Recruit, Collect, Analyze, Report. Each stage has a clear purpose, specific outputs, and tooling to support it.

Define — Establish research context, primary objectives, and alignment to product OKRs. Identify the responsible team, target platform, and product area.

Frame — Structure research questions by theme, articulate the rationale for each inquiry, and identify the desired data points that will inform design decisions. Hypothesis formation happens here, not in synthesis.

Recruit — Segment and recruit participants by demographics, persona, complexity, maturity, and user type. Onboard with clear expectations and access.

Collect — Execute through interviews, usability testing, surveys, and analytics. Maintain a regular cadence of check-ins and feedback sessions.

Analyze — Code qualitative data, score feedback on Impact, Frequency, Feasibility, and Business Alignment. Prioritize and build a feedback backlog.

Report — Present findings cross-functionally through Insights Councils, dashboards, and roadmap updates. Validate prioritization with users when possible.

I created a Research Plan template that made this process repeatable and teachable. Every research project started with a clear framing of the problem, explicit research questions organized by theme, participant segmentation criteria, methodology selection, and a defined reporting structure. The template forced rigor before a single interview was scheduled.

A Research Tooling Ecosystem

I evaluated, selected, and implemented a complete tooling stack:

Dovetail for centralized insight capture, interview hosting, and AI-assisted synthesis. What used to take days of manual coding became minutes.

Lyssna for unmoderated testing, card sorting, tree testing, and prototype validation. Objective data on user interactions, not opinion.

Pendo for behavioral analytics, session replay, and in-product feedback. This was also the primary channel for in-app research recruitment, targeting specific user segments directly within the product.

Each tool had a defined role in the six-stage process. Pendo fed the Recruit and Collect stages. Dovetail owned Collect through Analyze. Lyssna powered validation testing. None of this was ad hoc.

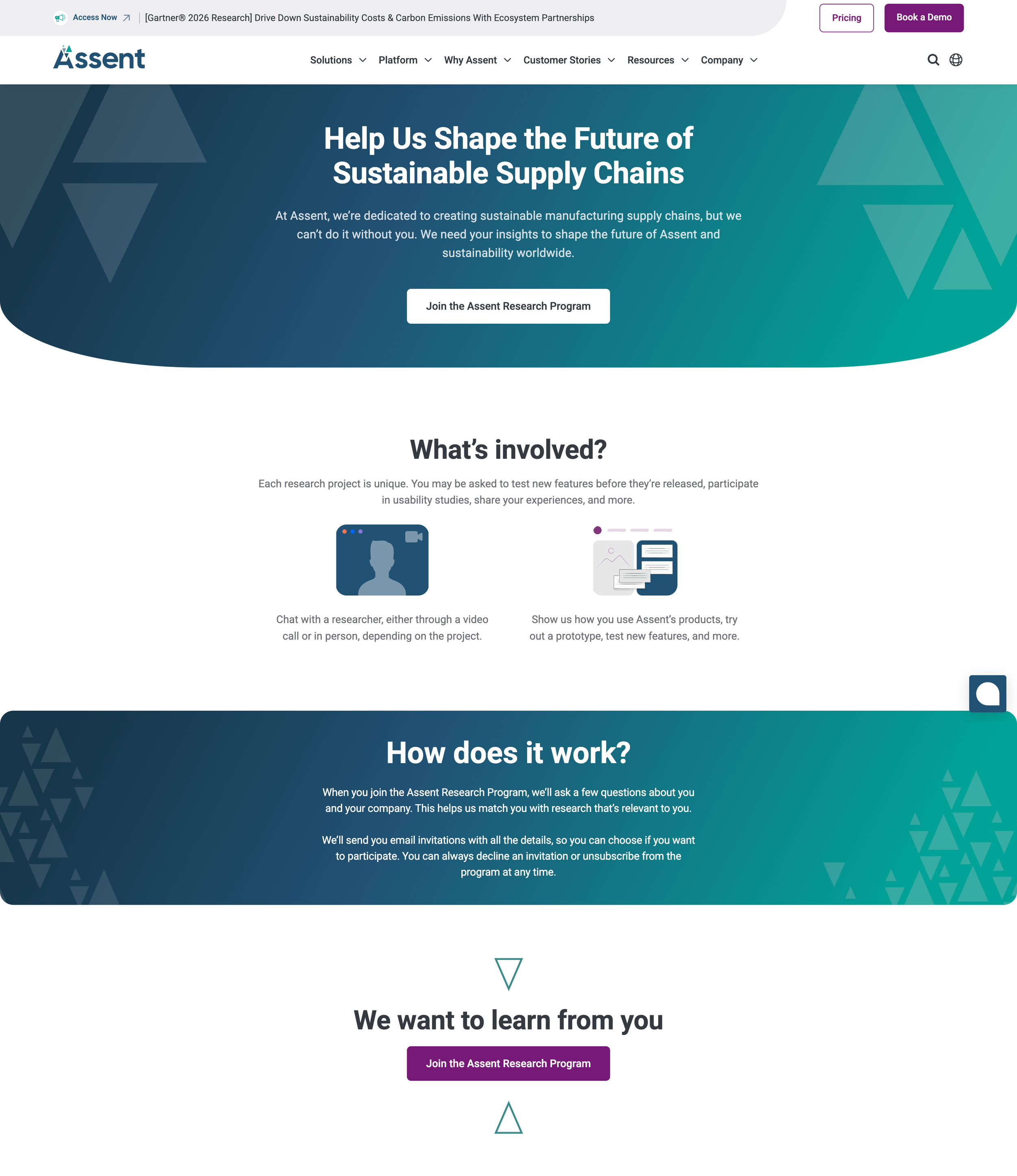

A Participant Recruitment Engine

This was the hardest part. Research without participants is theater. We built a participant pool that scaled to over 1,000 opted-in users, including compliance managers, procurement leads, engineers, and suppliers. We created a dedicated recruitment channel through a public-facing research landing page on assent.com, partnered with Product Operations, Product Marketing, and Customer Success to source participants, and established a formal incentive program to sustain engagement.

Recruitment wasn't a single channel. It was a system: in-app interception through Pendo, direct engagement through canned responses and email signature invitations, supplier webforms, and the recruitment page. Participants were segmented by demographics, persona, organizational complexity, compliance maturity, user behavior, and whether they were customers, suppliers, or both. I also built the onboarding structure: access and permissions, expectations, communication strategy, feedback mechanisms, touchpoint frequency, and research period duration. Participants knew exactly what they were signing up for and what to expect.

Team Capability Building

I mentored designers on research craft: interview protocols, probing questions, bias avoidance, and think-aloud facilitation. I gave structured feedback on discovery work, pushing designers to connect insights to hypotheses and success metrics, and I celebrated the team's research contributions publicly.

The goal was never to centralize research in my hands. It was to make every designer on the team a competent researcher.

Impact

Research Pool — 1,000+ opted-in participants across customer and supplier segments.

Structured Process — Six-stage methodology (Define, Frame, Recruit, Collect, Analyze, Report) with templates, tooling, and governance at each stage.

Time to Insights — Synthesis reduced from days of manual coding to minutes through Dovetail's AI-assisted analysis.

External Validation — Featured in the Verdantix Product Compliance questionnaire as a best-in-class, multi-method approach to user experience development.

Conversion Discovery — Identified a 1.3% conversion rate across 3.1k visitors, which triggered a design investigation.

QA Adoption — Epic maps became a required QA artifact for larger objectives.

Feedback Framework — Systematic scoring on Impact, Frequency, Feasibility, and Business Alignment replaced ad hoc prioritization.

Team Recognition — Recognized across the organization as a high-functioning group with a strong discovery reputation.